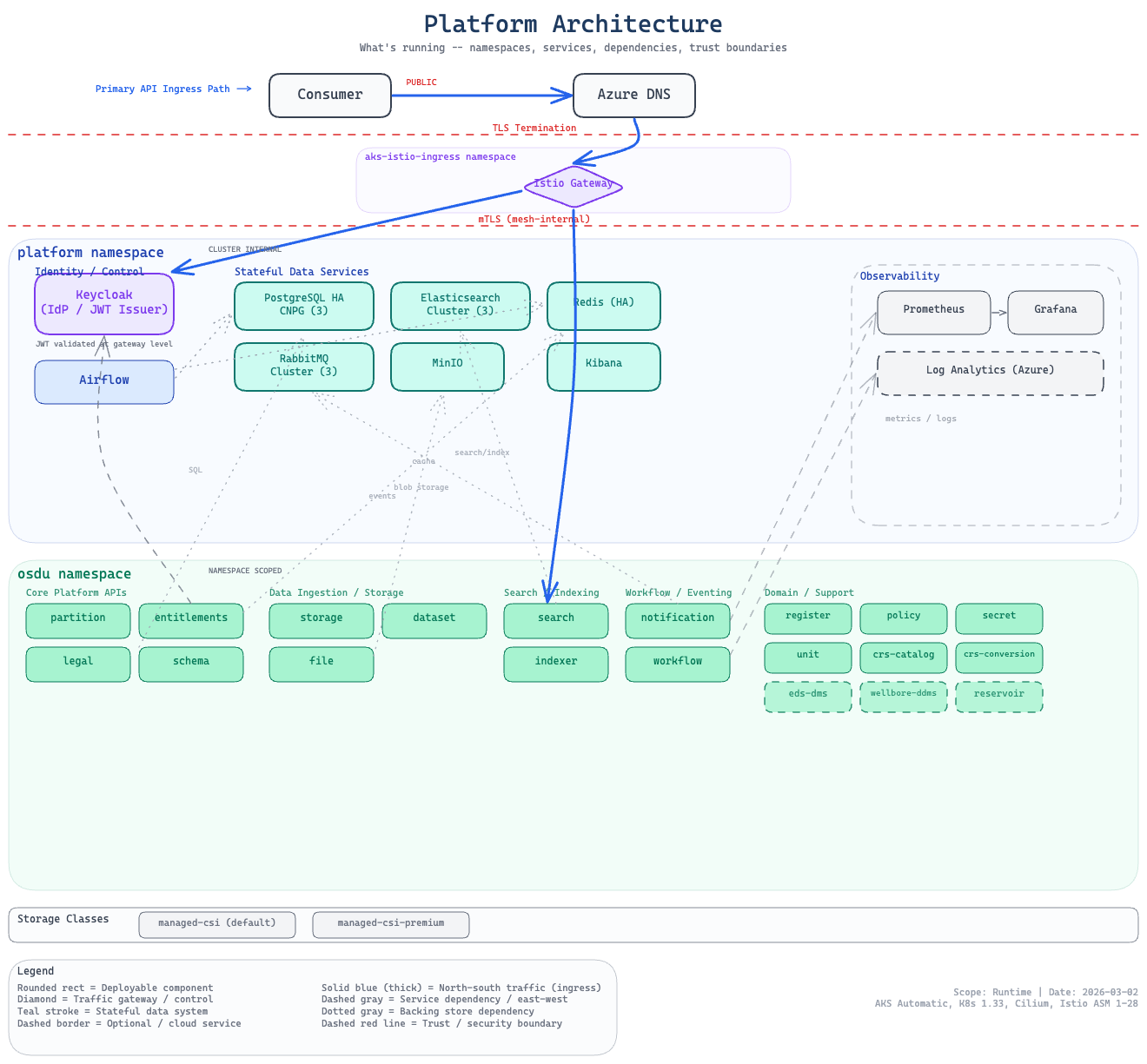

Platform Services

The platform layer deploys all middleware into the platform namespace. These components provide the data, messaging, identity, and networking foundation that OSDU services depend on.

Component Summary

| Component | Version | Storage | Enable Flag |
|---|---|---|---|
| Elasticsearch | 8.15.2 | 3x 128Gi Premium SSD | enable_elasticsearch |
| Kibana | 8.15.2 | None | (with Elasticsearch) |
| PostgreSQL (CNPG) | 17 | 3x 8Gi + 4Gi WAL | enable_postgresql |
| RabbitMQ | 4.1.0 | 3x 8Gi Premium SSD | enable_rabbitmq |
| Redis | 8.2.1 (Bitnami chart 24.1.3) | 1x master 8Gi + 2x replica 8Gi | enable_redis |
| MinIO | RELEASE.2024-12-18 (chart 5.4.0) | 10Gi managed-csi | enable_minio |
| Keycloak | 26.5.4 | None (uses PostgreSQL) | enable_keycloak |
| Airflow | chart 1.19.0 | None (uses PostgreSQL) | enable_airflow |

Foundation-layer components

cert-manager (v1.19.3), ECK operator (v3.3.0), and CNPG operator (v0.27.1) are deployed in the foundation layer (software/foundation/), not the stack. They are cluster-wide singletons shared across all stacks. See Deployment Model for the layer architecture.

All components default to enabled except Airflow. See the enable_* variables in software/stack/variables-flags-*.tf for the complete list.

Elasticsearch Cluster

Architecture: 3-node cluster with combined master/data/ingest roles, managed by the ECK operator (v3.3.0, deployed in the foundation layer).

Storage: Custom StorageClass with Premium LRS and Retain policy:

    provisioner: disk.csi.azure.com
    parameters:
      skuName: Premium_LRS
      cachingMode: ReadOnly
    reclaimPolicy: Retain
    volumeBindingMode: WaitForFirstConsumer

Internal endpoint: elasticsearch-es-http.platform.svc.cluster.local:9200
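As a rough illustration of the topology described above, an ECK `Elasticsearch` resource for a 3-node cluster with combined roles might look like the following. This is a sketch, not the manifest used by the stack: the nodeSet name `default` and the StorageClass name `es-storageclass` are assumptions, while the resource name `elasticsearch` is inferred from the `elasticsearch-es-http` Service name.

```yaml
apiVersion: elasticsearch.k8s.elastic.co/v1
kind: Elasticsearch
metadata:
  name: elasticsearch              # ECK derives the elasticsearch-es-http Service from this name
  namespace: platform
spec:
  version: 8.15.2
  nodeSets:
    - name: default                # hypothetical nodeSet name
      count: 3                     # three nodes, each with all roles
      config:
        node.roles: ["master", "data", "ingest"]
      volumeClaimTemplates:
        - metadata:
            name: elasticsearch-data            # claim name ECK uses for the data volume
          spec:
            accessModes: ["ReadWriteOnce"]
            storageClassName: es-storageclass   # hypothetical name for the custom StorageClass
            resources:
              requests:
                storage: 128Gi
```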

Probe workaround: The ECK operator Helm chart does not expose probe configuration, so a Helm postrenderer injects tcpSocket probes against the operator's webhook port (9443) during deployment (see ADR-0004).

Selector workaround: ECK creates services with overlapping selectors, violating AKS K8sAzureV1UniqueServiceSelector. ECK's native service selector overrides differentiate them (see ADR-0011).

Elastic Bootstrap

Post-deploy Job that configures index templates, ILM policies, and aliases required by OSDU services. Runs after Elasticsearch is healthy and pulls credentials from the elasticsearch-es-elastic-user secret.
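The credential handoff can be sketched as a Job that reads the `elastic` user's password from the ECK-managed secret and calls the Elasticsearch API. Everything except the secret name and the endpoint is hypothetical here: the Job name, the image, the policy name `example-policy`, and the policy body are placeholders, and the use of HTTPS with `-k` assumes ECK's default self-signed TLS is still enabled.

```yaml
apiVersion: batch/v1
kind: Job
metadata:
  name: elastic-bootstrap          # hypothetical Job name
  namespace: platform
spec:
  backoffLimit: 3
  template:
    spec:
      restartPolicy: OnFailure
      containers:
        - name: bootstrap
          image: curlimages/curl:latest   # placeholder image, not the one used by the stack
          env:
            - name: ES_PASSWORD
              valueFrom:
                secretKeyRef:
                  name: elasticsearch-es-elastic-user
                  key: elastic            # ECK stores the elastic user's password under this key
          command: ["sh", "-c"]
          args:
            - |
              # Create one illustrative ILM policy; real bootstrap logic would also
              # create the index templates and aliases the OSDU services expect.
              curl --fail -k -u "elastic:${ES_PASSWORD}" \
                -X PUT "https://elasticsearch-es-http.platform.svc.cluster.local:9200/_ilm/policy/example-policy" \
                -H "Content-Type: application/json" \
                -d '{"policy":{"phases":{"delete":{"min_age":"30d","actions":{"delete":{}}}}}}'
```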

PostgreSQL (CloudNativePG)

3-instance HA PostgreSQL cluster managed by the CNPG operator with synchronous replication.

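Architecture: 1 primary (read-write) + 2 replicas (read-only), spread across 3 availability zones. Each commit waits for acknowledgement from one of the two replicas (see the quorum settings in the table below).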

Configuration:

| Setting | Value |
|---|---|
| Operator | CNPG chart cloudnative-pg v0.27.1 |
| Instances | 3 (synchronous quorum: minSyncReplicas: 1, maxSyncReplicas: 1) |
| Databases | 14 separate databases (one per OSDU service), matching ROSA topology |
| Storage | 8Gi data + 4Gi WAL per instance on pg-storageclass (Premium_LRS, Retain) |
| Read-write | postgresql-rw.platform.svc.cluster.local:5432 |
| Read-only | postgresql-ro.platform.svc.cluster.local:5432 |
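The settings in the table map fairly directly onto a CNPG `Cluster` resource. The sketch below is an approximation under stated assumptions: the cluster name `postgresql` is inferred from the `postgresql-rw` and `postgresql-ro` Service names, the `affinity` block is one possible way to get zone spreading rather than the documented one, and the per-service database bootstrap is omitted.

```yaml
apiVersion: postgresql.cnpg.io/v1
kind: Cluster
metadata:
  name: postgresql                 # CNPG creates the postgresql-rw and postgresql-ro Services
  namespace: platform
spec:
  instances: 3                     # 1 primary + 2 replicas
  minSyncReplicas: 1               # at least one replica must confirm each commit
  maxSyncReplicas: 1               # at most one replica is waited on
  storage:
    size: 8Gi
    storageClass: pg-storageclass
  walStorage:
    size: 4Gi
    storageClass: pg-storageclass
  affinity:                        # assumption: anti-affinity across zones
    enablePodAntiAffinity: true
    topologyKey: topology.kubernetes.io/zone
    podAntiAffinityType: required
```

Applications that only read can point at the `-ro` Service to keep load off the primary; anything that writes must use `-rw`.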

CNPG probe exemption: CNPG creates short-lived initdb/join Jobs that cannot have health probes. The exemption is bootstrapped separately in infra-access/main.tf so core infrastructure can still provision with Contributor rights (see ADR-0007 and ADR-0020).

See ADR-0014 for the ROSA-aligned database model.

Redis

Bitnami Redis chart providing an in-memory cache layer for OSDU services.

Architecture: 1 master + 2 replicas using Bitnami's Redis Helm chart (v24.1.3). Master handles writes; replicas provide read scalability and failover.

Configuration:

| Setting | Value |
|---|---|
| Chart | bitnami/redis v24.1.3 |
| Image | Redis 8.2.1 |
| Master | 1 pod, 8Gi PVC |
| Replicas | 2 pods, 8Gi PVC each |
| Auth | Password from redis-secret |

Internal endpoint: redis-master.platform.svc.cluster.local:6379

Redis is primarily used by the Entitlements service for caching authorization group lookups. Other OSDU services may use it for session or response caching. The Bitnami chart includes Sentinel support, but this deployment uses the standard master-replica topology.
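In Helm values terms, the master-replica topology described here could be expressed roughly as follows. This is a sketch of Bitnami chart values, not the values file used by the stack; in particular the secret key `redis-password` is an assumption.

```yaml
architecture: replication          # master + replicas (the chart's non-standalone mode)
auth:
  enabled: true
  existingSecret: redis-secret
  existingSecretPasswordKey: redis-password   # assumed key inside redis-secret
master:
  persistence:
    size: 8Gi
replica:
  replicaCount: 2
  persistence:
    size: 8Gi
sentinel:
  enabled: false                   # Sentinel is available in the chart but not used here
```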

Postrender patches: The Kustomize postrender adds resource requests/limits, seccomp profiles, and health probes to comply with AKS safeguards.

RabbitMQ

RabbitMQ cluster for async messaging between OSDU services.

Configuration:

| Setting | Value |
|---|---|
| Deployment | Raw Kubernetes manifests (StatefulSet, Services, ConfigMap) |
| Image | rabbitmq:4.1.0-management-alpine |
| Replicas | 3 (clustered via DNS peer discovery) |
| Storage | 8Gi per node on rabbitmq-storageclass (Premium_LRS, Retain) |

Internal endpoint: rabbitmq.platform.svc.cluster.local:5672

No Helm chart is used. See ADR-0005 for the rationale.

Istio opt-out

RabbitMQ pods opt out of Istio sidecar injection (sidecar.istio.io/inject: "false") because the RabbitMQ clustering protocol requires NET_ADMIN capabilities incompatible with the mesh sidecar. Without the sidecar these pods get no mesh mTLS, so access relies on password authentication instead.
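For orientation, the opt-out sits on the pod template of the StatefulSet. The trimmed fragment below is a sketch: the `app: rabbitmq` labels and the headless Service name are hypothetical, volume claims and configuration are left out, and the key is shown as a pod label, which is the form current Istio releases document (older setups use the same key as an annotation, and the source does not say which form this repository uses).

```yaml
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: rabbitmq
  namespace: platform
spec:
  serviceName: rabbitmq-headless   # hypothetical headless Service used for peer discovery
  replicas: 3
  selector:
    matchLabels:
      app: rabbitmq                # hypothetical label
  template:
    metadata:
      labels:
        app: rabbitmq
        sidecar.istio.io/inject: "false"   # keep the Istio sidecar out of these pods
    spec:
      containers:
        - name: rabbitmq
          image: rabbitmq:4.1.0-management-alpine
          ports:
            - name: amqp
              containerPort: 5672
```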

Keycloak

Identity provider for OSDU authentication and authorization, deployed as raw Kubernetes manifests (see ADR-0016).

Configuration:

| Setting | Value |
|---|---|
| Image | quay.io/keycloak/keycloak:26.5.4 |
| Deployment | Raw StatefulSet |
| Database | PostgreSQL (keycloak DB via CNPG bootstrap) |
| OSDU realm | Auto-imported at startup with datafier confidential client |

Access: External via Gateway API HTTPRoute when a hostname is configured (keycloak_hostname != ""). When no hostname is set, use kubectl port-forward to reach the admin console.
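When a hostname is configured, the external route might resemble the HTTPRoute below. It is a sketch only: the hostname `keycloak.example.com`, the backend Service name `keycloak`, and port 8080 are assumptions, and the parent Gateway reference reuses the `gateway` / `aks-istio-ingress` names that appear in the ClusterIssuer configuration later on this page.

```yaml
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: keycloak                   # hypothetical route name
  namespace: platform
spec:
  parentRefs:
    - name: gateway
      namespace: aks-istio-ingress
  hostnames:
    - keycloak.example.com         # placeholder for the value of keycloak_hostname
  rules:
    - matches:
        - path:
            type: PathPrefix
            value: /
      backendRefs:
        - name: keycloak           # assumed Service name
          port: 8080               # assumed HTTP port
```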

JWKS readiness gate: A null_resource.keycloak_jwks_wait ensures Keycloak is issuing valid tokens before OSDU services deploy.

Apache Airflow

Workflow orchestration for DAG-based task scheduling (see ADR-0012).

Configuration:

| Setting | Value |
|---|---|
| Chart | apache-airflow/airflow v1.19.0 |
| Image | apache/airflow:3.1.7 |
| Executor | KubernetesExecutor (pod per task, no persistent workers) |
| Database | PostgreSQL (airflow DB via CNPG bootstrap) |

Control-plane components run on the platform nodepool; task pods run on the default pool (scale-to-zero).
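A minimal sketch of chart values that would produce this setup is shown below. The Secret name `airflow-metadata` and the `agentpool: platform` node label are assumptions, only the scheduler is pinned for brevity, and the placement of task pods on the default pool (normally handled through the pod template) is not shown.

```yaml
executor: KubernetesExecutor       # one pod per task, no long-running workers
postgresql:
  enabled: false                   # skip the chart's bundled PostgreSQL in favor of CNPG
data:
  metadataSecretName: airflow-metadata   # hypothetical Secret holding the DB connection string
scheduler:
  nodeSelector:
    agentpool: platform            # assumed node label for the platform nodepool
```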

Note

Airflow defaults to disabled (enable_airflow = false) because it is not yet integrated with OSDU DAGs.

MinIO

S3-compatible object storage for development and testing.

Configuration:

| Setting | Value |
|---|---|
| Chart | minio/minio v5.4.0 |
| Mode | Standalone (single pod) |
| Storage | 10Gi managed-csi PVC |

Internal endpoints:

- API: minio.platform.svc.cluster.local:9000
- Console: minio.platform.svc.cluster.local:9001
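The standalone configuration above corresponds to a small set of chart values. This is a sketch of the relevant keys only, not the values file used by the stack; credentials and resource settings are omitted.

```yaml
mode: standalone                   # single pod, no distributed erasure coding
replicas: 1
persistence:
  enabled: true
  size: 10Gi
  storageClass: managed-csi
```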

cert-manager

Automatic TLS certificate management deployed in the foundation layer.

Configuration:

| Setting | Value |
|---|---|
| Chart | cert-manager v1.19.3 |
| Gateway API | Enabled (--enable-gateway-api flag) |
| ClusterIssuers | Let's Encrypt production + staging |
| Challenge type | HTTP-01 via Istio Gateway |

cert-manager watches for Certificate resources created by the gateway module and provisions Let's Encrypt TLS certificates automatically. Each external endpoint (OSDU API, Kibana, Keycloak, Airflow) gets its own Certificate and corresponding Secret.

The HTTP-01 challenge solver routes through the Istio ingress gateway, meaning cert-manager requires the gateway to be functional before it can complete certificate issuance. Staging certificates are used during development to avoid Let's Encrypt rate limits.

ClusterIssuer configuration:

    apiVersion: cert-manager.io/v1
    kind: ClusterIssuer
    metadata:
      name: letsencrypt-production
    spec:
      acme:
        server: https://acme-v02.api.letsencrypt.org/directory
        privateKeySecretRef:
          name: letsencrypt-production
        solvers:
          - http01:
              gatewayHTTPRoute:
                parentRefs:
                  - name: gateway
                    namespace: aks-istio-ingress
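A Certificate that requests a TLS certificate from the `letsencrypt-production` issuer could look like the sketch below. The resource name, namespace, Secret name, and hostname are all hypothetical; the actual Certificate resources are generated by the gateway module.

```yaml
apiVersion: cert-manager.io/v1
kind: Certificate
metadata:
  name: keycloak-tls               # hypothetical name
  namespace: aks-istio-ingress     # assumed: the namespace of the Gateway that terminates TLS
spec:
  secretName: keycloak-tls         # Secret that cert-manager fills with the key pair
  dnsNames:
    - keycloak.example.com         # placeholder hostname
  issuerRef:
    name: letsencrypt-production
    kind: ClusterIssuer
```

Pointing `issuerRef.name` at the staging issuer instead is the usual way to iterate without consuming production rate limits.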

See also

- Deployment Model — layer architecture and lifecycle scripts

- Service Architecture — how OSDU services consume these middleware components

- Traffic & Routing — Gateway API ingress and DNS configuration

- Security — Istio mTLS, pod security, and deployment safeguards